AI Content Strategy & Governance: Why Source of Truth Matters

Summary:

Enterprises are deploying AI tools faster than they're sorting out the content those tools depend on. The result is AI agent sprawl - multiple tools pulling from different, ungoverned sources, confidently producing inaccurate outputs at scale. In regulated industries, that's not just an inconvenience; it's a compliance risk. The fix isn't a better AI tool. It's a governed, structured single source of truth that every AI agent draws from. Author-it's relational database architecture and AION publishing output are built for exactly this - clean, metadata-rich, AI-ready content delivered automatically, without custom pipeline work.

When AI Agents Multiply, the Content They Pull From Starts to Matter

There's a quiet problem taking shape inside a lot of enterprises right now.

Teams are rolling out AI tools - chatbots, copilots, internal knowledge assistants, customer-facing agents. Each one needs content to function. Each one needs to give accurate, compliant answers. And each one is, often without anyone fully realising it, pulling from a different place.

Some are scraping SharePoint. Some are indexing a help portal that hasn't been updated since last year. Some are reading PDFs that contain three versions of the same procedure, all slightly different.

This is content sprawl. And when AI agents hit it, the consequences aren't just annoying - in regulated industries, they can be serious.

The source of truth problem isn't new. AI just made it urgent.

Documentation teams have wrestled with content sprawl for decades. Multiple systems, duplicated content, version confusion, inconsistent terminology. Most organisations manage it imperfectly - a mix of process discipline and people who know which version to trust.

That approach worked when humans were the only ones reading the content. Humans could apply judgement. They'd cross-reference, ask a colleague, check the date on the document.

AI agents don't do that. They retrieve and respond. The quality of what they say depends directly on the quality of what they're reading.

Feed them clean, structured, governed content? They perform well. Feed them fragmented, inconsistent, unversioned content? They confidently say the wrong thing - at scale, at speed.

The fix isn't a better AI tool. It's better content infrastructure.

Here's the framing that resonated when we talked about this with a tier-one healthcare company recently.

Their writing team is presenting to global executives on AI content strategy. The immediate question from their compliance-focused documentation manager wasn't "which AI tool should we buy?" It was: "How do we handle regional regulatory requirements across all of this?"

That's the right question. Because the AI tool is the easy part. The content it draws from is the hard part.

For a regulated organisation, that content needs to be:

- Accurate - the current, approved version, not a stale draft

- Structured - organised in a way AI systems can navigate and understand

- Rich in metadata - so the AI knows what the content is about, who approved it, and when it was last reviewed

- Governed - with audit trails, version control, and approval workflows that mean you can trust what's in there

Most organisations have none of this in place before they start deploying AI. They deploy first, discover the problem second.
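To make those four properties concrete, here is a minimal sketch of how a team might check a piece of content against them before letting an AI agent read it. The `ContentRecord` fields, the status values, and the one-year review window are all assumptions chosen for illustration; they are not Author-it's data model.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class ContentRecord:
    """Hypothetical metadata for one piece of content (illustrative field names)."""
    object_id: str
    version: int
    status: str                      # assumed values: "draft", "approved", "superseded"
    approved_by: Optional[str]
    last_reviewed: Optional[date]
    topics: list[str]                # what the content is about


def governance_gaps(record: ContentRecord, today: date,
                    max_age_days: int = 365) -> list[str]:
    """Return the reasons this record is not yet safe to feed to an AI agent."""
    gaps = []
    if record.status != "approved":
        gaps.append("not the approved version")
    if not record.approved_by:
        gaps.append("no approver recorded")
    if record.last_reviewed is None:
        gaps.append("no review date")
    elif today - record.last_reviewed > timedelta(days=max_age_days):
        gaps.append("review is out of date")
    if not record.topics:
        gaps.append("no subject metadata")
    return gaps
```

An empty list means the record passes every check. Anything else is a specific, fixable gap rather than a vague sense that the content "isn't ready".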

Why the database architecture underneath your CCMS actually matters

This is where the conversation gets technical - but stick with it, because it's important.

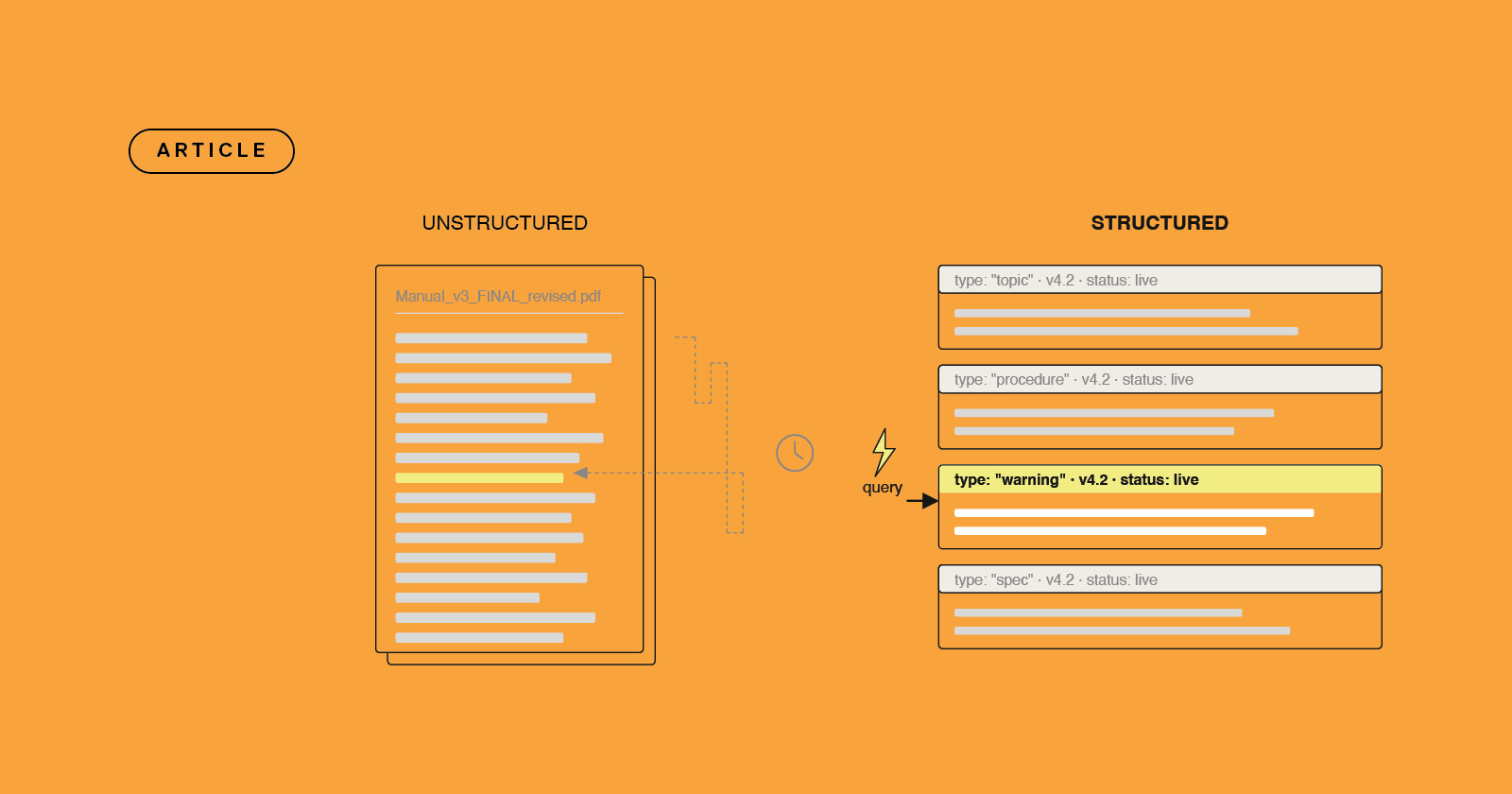

Most CCMS platforms store content in a file-based or XML-based structure. That's fine for publishing to PDFs or help portals. But when you want to publish content that an AI can consume intelligently - with full context, resolved variables, complete metadata, accurate hierarchy - file-based systems start to show their limits.

Author-it is built on a relational database. That architecture is why it can publish metadata-rich content for AI knowledge graphs automatically, without requiring the customer to configure anything special. Organisations that want to add their own taxonomy layers on top can still extend it.

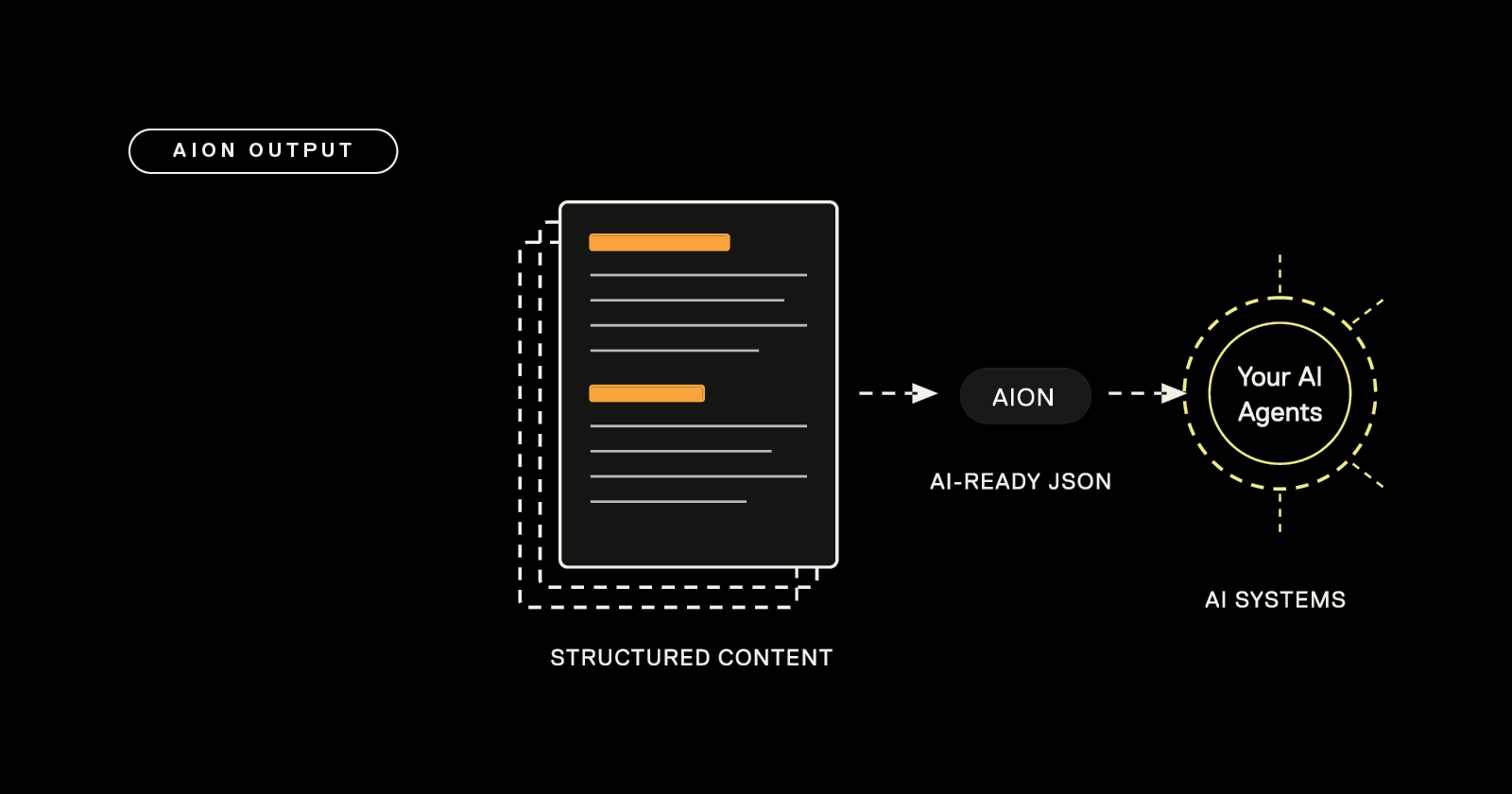

When we publish to AION - our structured JSON output format for LLM consumption - the output isn't just the text. It's the full content hierarchy. Books, sub-books, topics. Resolved variables. Metadata including object IDs, authorship, approval status, and timestamps. Image alt text and descriptions. All of it, structured and clean, delivered via URL for automated ingestion.
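As a rough illustration of why hierarchy and metadata matter to a downstream pipeline, the sketch below walks a small nested JSON document and emits one record per topic, keeping its position in the book structure. The payload shape and every key name (`type`, `children`, `metadata`, `body`, and so on) are invented for this example and should not be read as the actual AION schema.

```python
import json

# Hypothetical payload: key names are illustrative, not the real AION format.
SAMPLE = """
{
  "type": "book", "id": "B-100", "title": "Device Manual",
  "children": [
    {"type": "sub-book", "id": "B-110", "title": "Maintenance",
     "children": [
       {"type": "topic", "id": "T-111", "title": "Cleaning",
        "metadata": {"approval_status": "approved",
                     "author": "a.writer",
                     "modified": "2025-03-01T09:00:00Z"},
        "body": "Clean the sensor weekly."}
     ]}
  ]
}
"""


def flatten_topics(node, path=()):
    """Yield one record per topic, preserving its place in the hierarchy."""
    here = path + (node["title"],)
    if node["type"] == "topic":
        yield {
            "id": node["id"],
            "path": " > ".join(here),
            "metadata": node.get("metadata", {}),
            "text": node.get("body", ""),
        }
    # Books and sub-books carry their topics in "children".
    for child in node.get("children", []):
        yield from flatten_topics(child, here)


topics = list(flatten_topics(json.loads(SAMPLE)))
```

Each resulting record carries its breadcrumb path and approval metadata alongside the text, so a retrieval step can filter or cite by them instead of working from bare paragraphs.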

That's not something you can retrofit onto a document-based or DITA-based system easily. It requires the right foundation underneath.

What AI agent sprawl actually looks like in practice

Here's a scenario that plays out in enterprises regularly.

An organisation deploys three AI tools in a year:

- A customer-facing chatbot for product support

- An internal knowledge assistant for sales and account teams

- A regulatory compliance assistant for their legal and documentation teams

Each team chose their tool independently. Each tool was pointed at whatever content was available. Nobody coordinated the source.

Twelve months later: the chatbot is giving product guidance based on a spec sheet that was superseded eight months ago. The sales assistant is quoting a pricing structure that changed two quarters back. The compliance assistant is returning procedures for a jurisdiction whose requirements have since been updated.

None of the AI tools are broken. The content infrastructure is broken.

The fix - in every case - is the same: a single governed source of truth that all AI agents draw from, with version control and metadata they can trust.
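One way to picture that single source is a shared lookup that every agent calls, which only ever returns the latest approved version of each item. The sketch below assumes a simple `Version` record with illustrative status values; it is not a description of how any particular platform implements versioning.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Version:
    """Hypothetical version record (illustrative fields and status values)."""
    object_id: str
    number: int
    status: str   # assumed values: "approved", "draft", "superseded"
    text: str


def current_approved(versions):
    """Return {object_id: latest approved Version}; drafts and superseded versions are skipped."""
    latest = {}
    for v in versions:
        if v.status != "approved":
            continue
        best = latest.get(v.object_id)
        if best is None or v.number > best.number:
            latest[v.object_id] = v
    return latest
```

If the chatbot, the sales assistant, and the compliance assistant all read through the same function, a superseded spec sheet cannot reach any of them, however many agents are added later.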

The competitive reality for content platforms

Not every CCMS can do this. That's worth being direct about.

DITA-based platforms like MadCap IXIA or Heretto are built around XML structures. They're powerful for traditional publishing. But AI-ready output - genuinely structured, metadata-rich JSON that an LLM can reason over - typically isn't a native capability for XML-first tools. It's something you'd need to build a pipeline around.

Document-based tools like MadCap Flare or Paligo are even further from this. They're excellent for single-author or small-team publishing. They're not designed for the kind of relational content structure that AI agents need.

Author-it's architecture - the relational database, the component model, the metadata layer - was built for this kind of content management. Not because we anticipated the AI wave specifically, but because regulated enterprise customers have always needed this level of structure. The AI use case maps almost perfectly onto what we were already doing.

AION is the output format that bridges the two worlds: governed, structured content in Author-it, published as clean JSON ready for any LLM or AI agent pipeline.

What to actually do about it

If you're a content strategy leader in a regulated industry and you're thinking about AI, here's the practical sequence:

1. Audit your current source landscape: Where does your content actually live? How many systems? How duplicated is it? What's the version control situation? Be honest. Most audits surface more chaos than expected.

2. Fix governance before you fix the AI tooling: Content governance isn't glamorous. But it's the work that makes AI reliable. Establish a single source of truth, put approval workflows in place, and get your metadata in order before you point an AI at anything.

3. Choose content infrastructure that can publish to AI natively: Not every CCMS has this capability. Ask your platform vendor specifically: what does your AI-ready output look like? How is it structured? What metadata is included? What does an LLM actually receive when it ingests your content?

4. Plan for agent sprawl: The number of AI tools your organisation runs will only increase. Each one needs a content source. Centralise early. It's much easier to govern one source than to retrofit governance onto five.
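The audit in step 1 can start small. As a minimal sketch, assuming you can export each document as a `(system, path, text)` tuple, the code below groups locations that hold the same text once case and whitespace are normalised. The function names and input shape are hypothetical.

```python
import hashlib
from collections import defaultdict


def fingerprint(text: str) -> str:
    """Hash the text after lower-casing and collapsing whitespace."""
    normalised = " ".join(text.lower().split())
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()


def find_duplicates(documents):
    """documents: iterable of (system, path, text) tuples.

    Returns a list of groups, each a list of (system, path) locations
    that contain the same normalised text.
    """
    groups = defaultdict(list)
    for system, path, text in documents:
        groups[fingerprint(text)].append((system, path))
    return [locations for locations in groups.values() if len(locations) > 1]
```

This only catches copies that are identical after normalisation. Versions that differ slightly, which is the more dangerous case, need a fuzzier comparison, but even the exact-match pass usually shows how many places the same procedure lives.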

The bottom line

AI doesn't create good content strategy. It amplifies whatever content strategy you already have.

If your content is accurate, structured, governed, and rich in metadata, AI will make it more useful, more accessible, and more impactful. If your content is fragmented, inconsistently versioned, and siloed across systems, AI will amplify that too. Faster than any human could.

The companies that will use AI well in regulated environments aren't the ones that deployed the most AI tools fastest. They're the ones that sorted their content foundation first.

Is your content AI-ready?

The structured, governed content that AI agents need is the same content your documentation team already produces - if it's being managed correctly. Talk to us about what that foundation looks like in practice. Book a demo →

Frequently Asked Questions

Q: What is AI content governance?

A: AI content governance is the practice of ensuring that the content AI systems consume is accurate, up to date, appropriately structured, and traceable. It covers version control, approval workflows, metadata management, and the processes that keep content trustworthy at its source before an AI agent ever touches it. In regulated industries, this is the difference between AI that helps and AI that creates compliance risk.

Q: Why does content governance matter more when you're using AI?

A: AI agents don't apply human judgement. A technical writer reading an outdated procedure might instinctively cross-check it. An AI agent will retrieve it and respond confidently with whatever it finds. The more AI tools an organisation deploys, the more it amplifies the quality - or the problems - already present in its content. Governance closes that gap before it becomes a liability.

Q: What is content sprawl, and how does it affect AI performance?

A: Content sprawl occurs when the same or related content exists across multiple systems - SharePoint, legacy portals, PDFs saved in multiple locations. Each version may differ slightly. AI agents have no reliable way to determine which version is current or authoritative, so they use whatever they find. In regulated industries, this produces compliance risk and potentially incorrect AI outputs at scale.

Q: What is the difference between a document-based CMS and a structured CCMS for AI readiness?

A: A document-based CMS stores content as files - Word documents, PDFs, pages. A structured CCMS stores content as components with metadata, relationships, and version tracking built in. For AI consumption, structured content can be published with full hierarchy, resolved variables, authorship, and approval status intact. Document-based content cannot provide that context natively, which means the AI receives less signal to reason with.

Q: What is AION, and how does it relate to AI content governance?

A: AION is Author-it's structured JSON publishing output, designed specifically for consumption by LLMs, RAG pipelines, AI agents, and enterprise knowledge systems. It delivers governed, structured content - with full hierarchy, metadata, and resolved variables - as clean JSON via URL for automated ingestion. AION is available as part of Author-it's 2026.R1 release and requires no custom pipeline development.

Q: Can DITA-based CCMS platforms publish AI-ready content?

A: DITA-based tools produce structured XML, which can be transformed for AI consumption, but generating clean, metadata-rich JSON that LLMs can reason over typically requires additional pipeline work. It is not a native capability for most XML-first platforms. Author-it's relational database architecture enables AI-ready JSON output as a built-in publishing format without requiring custom infrastructure.

Q: What should we audit before deploying AI agents across our content?

A: Before deploying AI tools, audit how many systems your content lives across, whether version control is reliable, whether content has meaningful metadata attached, what your approval workflow looks like, and whether a single authoritative source exists. Most audits surface more fragmentation than teams expect. Identifying this before deployment is significantly easier than remedying it after an AI tool has been answering questions from a stale source.

Q: Is AI content governance only relevant for large enterprises?

A: No. The consequences of poor content governance scale with the volume and criticality of content. Smaller teams with tidy documentation may not feel much pain initially. But regulated organisations with multiple product lines, regional compliance requirements, and several AI tools deployed across departments will. The right time to establish governed content infrastructure is before sprawl becomes unmanageable.

Q: How does Author-it help organisations maintain a single source of truth for AI?

A: Author-it stores all content in a central Library with version control, approval workflows, role-based access, and full audit trails. AION publishes that governed content as structured JSON for AI consumption. Every AI agent drawing from Author-it draws from the same accurate, approved, metadata-rich source automatically - without requiring teams to maintain separate AI-specific content stores.

Q: How many AI tools does the average enterprise run, and why does that matter for content governance?

A: The number varies by organisation, but enterprises in regulated industries are increasingly running multiple concurrent AI deployments - customer support agents, internal knowledge assistants, compliance tools, productivity copilots. Each one needs content to function reliably. The governance challenge compounds with each additional tool. Centralising the content source before deployment is significantly more manageable than retrofitting governance across five or six tools after the fact.