Why RAG pipelines fail on enterprise documentation

Read time: 8 min

Why it matters: RAG failures in enterprise AI are almost always a content quality problem, not a model problem.

Who it's for: IT leads and AI architects running or planning enterprise RAG deployments.

Summary: RAG pipelines fail on enterprise documentation not because of model limitations, but because the content they retrieve is unstructured, version-ambiguous, and metadata-poor. The fix is upstream: structured content with typed components, version control, and rich metadata dramatically improves retrieval accuracy and eliminates the most common sources of AI hallucination in enterprise deployments.

Your RAG pipeline is not broken. Your content is.

You have deployed a retrieval-augmented generation pipeline. The model is capable. The infrastructure works. And the output is still wrong - confidently, fluently, specifically wrong.

This is the most common failure pattern in enterprise AI rollouts right now. Teams invest in model selection, embedding infrastructure, and vector databases. They run a proof of concept. It hallucinates. They tune the model. It still hallucinates. They write better prompts. Still hallucinating.

The reason is almost always the same: the content being retrieved is the problem, not the retrieval mechanism or the model.

Retrieval-augmented generation works by fetching the most relevant chunks of your content and passing them to a language model as context. The model then generates an answer grounded in what was retrieved. If what gets retrieved is ambiguous, outdated, inconsistent, or decontextualised, the model builds a confident answer on a bad foundation. To the user, that is a hallucination. And no amount of model tuning fixes a data quality problem.
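The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration, not a production design: a bag-of-words cosine similarity stands in for a real embedding model, and the names `retrieve` and `build_prompt` and the sample chunks are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, chunks, k=2):
    """Return the k chunks most similar to the query (toy stand-in for vector search)."""
    q = Counter(tokenize(query))
    ranked = sorted(chunks, key=lambda c: cosine(q, Counter(tokenize(c))), reverse=True)
    return ranked[:k]


def build_prompt(query, retrieved):
    """Assemble the context the language model would be asked to answer from."""
    context = "\n".join(f"- {c}" for c in retrieved)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical chunks: two conflicting install procedures and one unrelated fact.
chunks = [
    "Install firmware 3.1 by holding the reset button for ten seconds.",
    "Install firmware 4.2 from the admin console under Updates.",
    "The warranty covers parts for two years.",
]

query = "How do I install firmware?"
top = retrieve(query, chunks)
prompt = build_prompt(query, top)
```

Both install procedures land in the prompt, because both are relevant to the query. The model has no way to tell which one is current; that information was never in the content.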

The five content problems that break RAG

Based on where enterprise RAG deployments consistently fail, these are the content-side root causes:

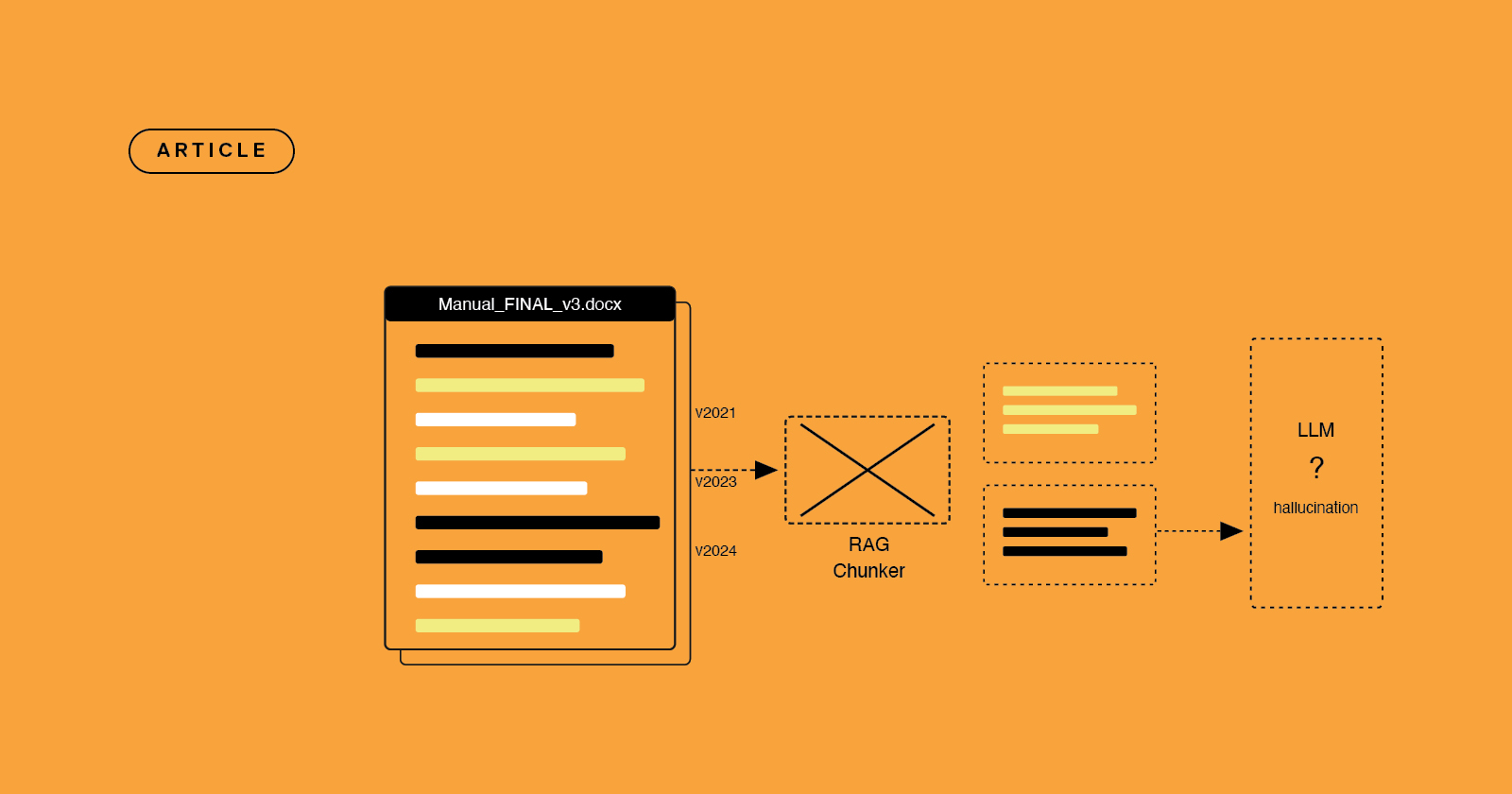

1. Version ambiguity

Your content repository contains three versions of the installation procedure - the 2021 original, a 2023 update, and a 2024 revision. The RAG system retrieves all three as candidates. The model synthesises them. The answer reflects a mix of procedure states that never existed simultaneously.

Structured content with proper release state management eliminates this. Only approved, current content gets published to the retrieval layer. Superseded versions exist in version history but do not contaminate retrieval.
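As a rough sketch of that publishing gate, assuming each component carries an identifier, a version number, and a release state (the `Component` class, its field names, and the state values here are illustrative, not any particular CCMS schema):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    component_id: str
    version: int
    release_state: str  # assumed values: "draft", "approved", "archived"
    text: str


def publishable(components):
    """Keep only the highest approved version of each component."""
    latest = {}
    for c in components:
        if c.release_state != "approved":
            continue  # drafts and archived versions never reach the retrieval layer
        current = latest.get(c.component_id)
        if current is None or c.version > current.version:
            latest[c.component_id] = c
    return list(latest.values())


# Hypothetical history of one installation procedure.
history = [
    Component("install-proc", 1, "archived", "2021 original procedure"),
    Component("install-proc", 2, "archived", "2023 updated procedure"),
    Component("install-proc", 3, "approved", "2024 revised procedure"),
    Component("install-proc", 4, "draft", "Unreviewed changes"),
]

to_index = publishable(history)
```

Only the 2024 revision is handed to the embedding step. The older versions stay in version history, where they belong.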

2. Decontextualised chunks

When a RAG system splits your documentation into chunks for embedding, it typically cuts by character count or paragraph break - not by semantic unit. A step that makes sense as part of a procedure becomes meaningless when extracted. A warning that applies to a specific product configuration loses its scope.

Structured content is already chunked at the component level. Topics, procedures, warnings, and specifications are discrete semantic units with defined scope. When retrieved, they make sense in isolation because they were designed to.
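The difference is easy to see side by side. The sketch below assumes a hypothetical two-component document and compares a naive fixed-size splitter with chunking at the component boundary; the 40-character chunk size is deliberately small to make the effect visible.

```python
def fixed_size_chunks(text, size):
    """Naive chunking: cut every `size` characters, ignoring meaning."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def component_chunks(components):
    """Component-level chunking: one chunk per authored unit."""
    return [c["text"] for c in components]


# Hypothetical structured source: a warning and the procedure it precedes.
components = [
    {"type": "warning", "text": "Warning: disconnect power before opening the housing."},
    {"type": "procedure", "text": "1. Open the housing. 2. Replace the fuse. 3. Close the housing."},
]

# The same content flattened into continuous prose, as in an exported document.
document = " ".join(c["text"] for c in components)

naive = fixed_size_chunks(document, 40)
structured = component_chunks(components)

# The warning is longer than 40 characters, so no naive chunk contains it whole.
warning = components[0]["text"]
warning_survives_naive = any(warning in chunk for chunk in naive)
warning_survives_structured = warning in structured
```

With the naive splitter the warning is cut across two chunks, and the second half is embedded alongside the first step of the procedure. With component-level chunks the warning is retrieved whole or not at all.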

3. Inconsistent terminology

Document A calls it a control module. Document B calls it a controller unit. Document C calls it a command interface. They are the same thing - but the retrieval system has no reliable way to know that. A query about the control module can miss relevant content in Documents B and C, especially when the terms are product-specific and retrieval relies partly on keyword matching.

Single-source authoring solves this. When every reference to a concept comes from the same component, terminology is consistent by architecture.
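For content that is not yet single-sourced, a terminology audit is a reasonable interim step. The sketch below assumes you maintain a small controlled vocabulary by hand; the `PREFERRED_TERMS` mapping and the sample documents are hypothetical.

```python
import re

# Assumed controlled vocabulary: preferred term -> known variants to flag.
PREFERRED_TERMS = {
    "control module": ["controller unit", "command interface"],
}


def find_variants(documents, preferred_terms=PREFERRED_TERMS):
    """Return (document, variant found, preferred term) for every non-preferred usage."""
    findings = []
    for name, text in documents.items():
        for preferred, variants in preferred_terms.items():
            for variant in variants:
                pattern = rf"\b{re.escape(variant)}\b"
                if re.search(pattern, text, flags=re.IGNORECASE):
                    findings.append((name, variant, preferred))
    return findings


documents = {
    "Document A": "Reset the control module before servicing.",
    "Document B": "The controller unit must be powered down.",
    "Document C": "Use the command interface to confirm status.",
}

findings = find_variants(documents)
```

The output is a worklist, not a fix: each finding still needs an author to confirm that the variant really does refer to the same thing before it is replaced.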

4. Missing metadata

Much of what makes a chunk the right answer sits outside its text: this procedure applies to firmware version 4.2, not 3.x; this warning is for EU configurations; this specification is for the enterprise tier. Without metadata, the retrieval layer cannot filter by these dimensions, and the wrong content gets retrieved.

A component content management system (CCMS) stores metadata at the component level. Product version, audience, geography, release state, topic type - all available for filtering at retrieval time.
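Filtering at retrieval time can be as simple as narrowing the candidate set before similarity ranking runs. A minimal sketch, assuming each chunk carries a flat metadata dictionary (the keys `firmware`, `region`, and `state` are illustrative names, not a standard):

```python
def matches(metadata, filters):
    """True when every requested filter value equals the chunk's metadata value."""
    return all(metadata.get(key) == value for key, value in filters.items())


def filter_candidates(chunks, filters):
    """Narrow the candidate set before any similarity ranking is applied."""
    return [c for c in chunks if matches(c["metadata"], filters)]


# Hypothetical chunks that are nearly identical as text but differ in scope.
chunks = [
    {"text": "Update procedure for firmware 3.x",
     "metadata": {"firmware": "3.x", "region": "EU", "state": "approved"}},
    {"text": "Update procedure for firmware 4.2",
     "metadata": {"firmware": "4.2", "region": "EU", "state": "approved"}},
    {"text": "Update procedure for firmware 4.2 (US)",
     "metadata": {"firmware": "4.2", "region": "US", "state": "approved"}},
]

candidates = filter_candidates(chunks, {"firmware": "4.2", "region": "EU"})
```

Most vector databases offer some form of metadata filtering alongside similarity search, but the filter is only as good as the metadata that was attached before embedding.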

5. Implicit relationships

A human reader understands that the warning on page 12 applies to the procedure on page 11, because they read the document sequentially. A RAG system retrieves individual chunks. The procedure gets retrieved. The warning does not.

Structured content makes relationships explicit. A warning is linked to the procedure it applies to. When retrieved, the relationship travels with the content.
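One way to make the relationship travel with the content is to expand each retrieval hit with the components it links to. The sketch below assumes components are stored by identifier with a `related` list; the identifiers and field names are hypothetical.

```python
# Hypothetical component store keyed by identifier.
components = {
    "proc-11": {"type": "procedure", "text": "Replace the fuse.", "related": ["warn-12"]},
    "warn-12": {"type": "warning", "text": "Disconnect power first.", "related": []},
    "spec-20": {"type": "specification", "text": "Fuse rating: 5 A.", "related": []},
}


def expand_with_related(hit_ids, components):
    """Add directly linked components to the retrieval hits, preserving order."""
    ordered = []
    seen = set()
    for component_id in hit_ids:
        for candidate in [component_id, *components[component_id]["related"]]:
            if candidate not in seen:
                seen.add(candidate)
                ordered.append(candidate)
    return ordered


# The similarity search returned only the procedure.
context_ids = expand_with_related(["proc-11"], components)
context = [components[i]["text"] for i in context_ids]
```

The expansion here follows one level of links only. Whether to follow links further is a design choice that depends on how much context the model can usefully take.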

What structured content looks like to a retrieval system

Consider two versions of the same technical documentation.

Version one: a Word document. Forty pages. Continuous prose formatted with headings. Written by multiple authors over several years. Terminology varies. Some sections reference earlier sections. Version status is implied by the file name (Manual_v3_FINAL_revised.docx). No metadata beyond the file properties.

Version two: the same content authored in a CCMS. Each procedure is a discrete component. Each warning is tagged with the procedures it applies to. Product version and configuration scope are metadata fields on every component. Terminology is controlled - every reference to a concept uses the same term because it is the same component. Release state is explicit - published components are current, everything else is in draft or archived.

Both contain the same information. But Version two is dramatically easier for a RAG system to work with. Chunks are semantically bounded. Metadata is available for filtering. Relationships are explicit. Version state is unambiguous.

AION: the direct line from structured content to your AI stack

Author-it's AION output format was built for exactly this problem.

AION generates a structured JSON export of your Author-it content that preserves component hierarchy, metadata, version state, and explicit relationships - ready for direct ingestion into a RAG pipeline, vector database, or AI agent context layer. No preprocessing. No hoping that a PDF chunker recovers structure that was lost in export. No manual metadata enrichment step.

The organisations getting reliable output from their enterprise AI deployments are not doing anything exotic. They have clean, structured, governed content - and a publishing format that delivers it to the AI stack in the shape the AI stack actually needs.

AION is that publishing format for Author-it customers. If you are already running structured content through Author-it, AION makes your AI deployment significantly more straightforward. Find out more at author-it.com/aion.

Where to start

If your RAG deployment is producing unreliable output, a content audit is the right first step - not further model tuning:

- Map the content your RAG system retrieves most - these are the highest-leverage assets to fix first

- Identify version ambiguity - anywhere multiple versions of the same content exist in the retrieval layer simultaneously

- Audit terminology consistency - the same concepts should use the same terms across all documents

- Add metadata before re-embedding - even basic topic type and version tags let the retrieval layer filter out content that does not apply, which improves retrieval precision

- Evaluate chunking strategy - if your chunks are crossing semantic boundaries, component-level structured content gives the retrieval layer better input
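The first two audit steps lend themselves to a quick script. This sketch assumes you can export a retrieval log (one document identifier per retrieved chunk) and a listing of what is in the index; both data shapes and the function names are hypothetical.

```python
from collections import Counter, defaultdict


def most_retrieved(retrieval_log, n=3):
    """Return the n document ids that appear most often in retrieval results."""
    return [doc_id for doc_id, _ in Counter(retrieval_log).most_common(n)]


def find_version_conflicts(index_records):
    """Return documents that have more than one version in the retrieval layer."""
    versions = defaultdict(set)
    for record in index_records:
        versions[record["doc_id"]].add(record["version"])
    return {doc_id: sorted(v) for doc_id, v in versions.items() if len(v) > 1}


# Hypothetical exports from the retrieval layer.
retrieval_log = [
    "install-guide", "safety-sheet", "install-guide", "install-guide", "safety-sheet",
]
index_records = [
    {"doc_id": "install-guide", "version": "2021"},
    {"doc_id": "install-guide", "version": "2023"},
    {"doc_id": "install-guide", "version": "2024"},
    {"doc_id": "safety-sheet", "version": "2024"},
]

priority = most_retrieved(retrieval_log, n=1)
conflicts = find_version_conflicts(index_records)
```

A document that shows up in both results, as the install guide does here, is the obvious place to start.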

The Author-it resources section covers these steps in more detail, including what to look for in a CCMS and how AION integrates with common RAG architectures.

RAG Documentation FAQ

Q: Why does my RAG pipeline produce incorrect answers from my documentation?

A: In most enterprise deployments, inaccurate RAG output is caused by content quality problems rather than model limitations. The most common causes are version ambiguity (multiple document versions in the retrieval layer), decontextualised chunks (chunking that splits semantic units mid-thought), inconsistent terminology (the same concept named differently across documents), missing metadata (no filters for product version, configuration, or audience), and implicit relationships (warnings and procedures separated at retrieval time).

Q: What is RAG and how does it use documentation?

A: RAG (Retrieval-Augmented Generation) is an AI architecture that retrieves relevant chunks of your content before generating a response. A user asks a question - the system searches a vector database of your embedded content, retrieves the most relevant chunks, and passes them to a language model as context. The model then generates an answer from that context. The accuracy of that answer depends directly on the quality, structure, and metadata of the retrieved content.

Q: What is structured content and why does it improve RAG performance?

A: Structured content is content organised into discrete, typed components - procedures, warnings, specifications, concepts - each with metadata and explicit relationships to other components. This maps directly to what RAG systems need: semantically bounded chunks that make sense in isolation, metadata for filtering, consistent terminology, and clear version state.

Q: How does version ambiguity cause AI hallucinations?

A: When multiple versions of the same document or procedure exist in a retrieval layer simultaneously, a RAG system may retrieve content from different version states and synthesise them into a single answer. The result reflects a combination of procedure states that never existed simultaneously. Structured content with explicit release state management prevents this by ensuring only approved, current components are published to the retrieval layer.

Q: What is AION and how does it help RAG deployments?

A: AION is Author-it's structured JSON publishing output, designed for direct ingestion into RAG pipelines, vector databases, and AI agents. It exports structured content with preserved component hierarchy, metadata, version state, and explicit relationships - without requiring preprocessing or PDF chunking.

Q: Can I fix RAG hallucinations without replacing my content infrastructure?

A: Partial improvements are possible - better chunking strategies, metadata enrichment, hybrid retrieval approaches, and output validation layers can all reduce hallucination rates. But the most reliable fix is upstream: clean, structured, metadata-rich content. If the content going into the retrieval layer is fundamentally unstructured and version-ambiguous, downstream fixes provide diminishing returns.

Q: What metadata should documentation have for better AI retrieval?

A: At minimum: topic type (procedure, warning, concept, specification), product or system version the content applies to, audience or configuration scope, and release state (draft, approved, current, archived). Additional metadata that improves retrieval precision includes geography or locale, product tier, and relationship tags to related components.
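A minimal pre-embedding check for that baseline might look like the sketch below. The field names are assumptions chosen to mirror the list above, not a schema from any particular tool.

```python
# Assumed field names for the minimum metadata set described above.
REQUIRED_FIELDS = ("topic_type", "product_version", "audience", "release_state")

# Allowed values for the two enumerated fields, taken from the answer above.
ALLOWED_VALUES = {
    "topic_type": {"procedure", "warning", "concept", "specification"},
    "release_state": {"draft", "approved", "current", "archived"},
}


def validate_metadata(metadata):
    """Return a list of problems; an empty list means the component can be embedded."""
    problems = []
    for field in REQUIRED_FIELDS:
        if not metadata.get(field):
            problems.append(f"missing {field}")
    for field, allowed in ALLOWED_VALUES.items():
        value = metadata.get(field)
        if value and value not in allowed:
            problems.append(f"invalid {field}: {value}")
    return problems


complete = {
    "topic_type": "procedure",
    "product_version": "4.2",
    "audience": "field technician",
    "release_state": "approved",
}
incomplete = {"topic_type": "note", "product_version": "4.2"}

complete_problems = validate_metadata(complete)
incomplete_problems = validate_metadata(incomplete)
```

Running a check like this before re-embedding keeps under-described components out of the index until someone has filled in the gaps.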